Chapter 11: Iterative Development

11 ITERATIVE DEVELOPMENT

11.1 Iterative Development Overview

Each cycle should:

- Be as short as possible, typically taking a day or two, with several cycles happening within a Timebox

- Be only as formal as it needs to be - in most cases limited to an informal cycle of Thought, Action and Conversation

- Involve the appropriate members of the Solution Development Team relevant to the work being done. At its simplest, this could be, for example, a Solution Developer and a Business Ambassador working together, or it could need involvement from the whole Solution Development Team including several Business Advisors

Each cycle begins and ends with a conversation (in accordance with DSDM’s principles to collaborate and to communicate continuously and clearly). The initial conversation is focussed on the detail of what needs to be done. The cycle continues with thought: a consideration of how the need will be addressed. At its most formal, this may be a collaborative planning event, but in most cases thought will be limited to a period of reflection and very informal planning. Action then refines the Evolving Solution or a feature of it. Where appropriate, action will be collaborative. Once work is completed to the extent that it can sensibly be reviewed, the cycle concludes with a return to conversation to decide whether what has been produced is good enough or whether another cycle is needed. Depending on the organisation and the nature of the work being undertaken, this conversation could range from an informal agreement to a formally documented demonstration, or a “show and tell” review with a wider group of stakeholders.

As Iterative Development proceeds, it is important to keep the agreed acceptance criteria for the solution, or the feature of it, in clear focus in order to ensure that the required quality is achieved without the solution becoming over-engineered. An agreed timescale for a cycle of evolution may also help maintain focus, promote collaboration and reduce risk of wasted effort.

11.2 Planning Iterative Development

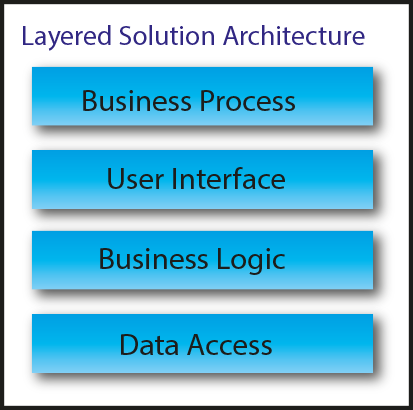

During Foundations, it is very important to decide on a strategy for development that encompasses how the potentially large problem of evolving a solution can be broken down into manageable chunks for delivery in Timeboxes. DSDM identifies two ways in which this may be considered. The first describes a requirement focus, the second describes a solution focus. It is important to note that neither is better than the other and equally valid alternative ways of approaching Iterative Development may be available; the key is to find the most appropriate development strategy for the project through discussion. DSDM does not dictate how the strategy should be formed and agreed, just that there should be a strategy for development.

11.2.1 Requirement focus

DSDM identifies that the requirements can be of three different types:

- Functional

- Usability

- Non-functional

(Note that usability is traditionally considered to be a type of non-functional requirement, but it is elevated into a class of its own because of its importance to the business user of the final solution and to facilitate business interaction with the rest of the Solution Development Team in this important aspect of solution design.)

An individual cycle of Iterative Development or even the work of a Timebox may focus on evolving the solution to meet one or more of these requirement types.

On a simple feature, a cycle may encompass all three perspectives at the same time. However, where Iterative Development of a feature involves many cycles, involving several different people, the team may decide to focus a cycle on one or perhaps two specific perspectives rather than covering all of them at the same time.

For example: The team may decide to focus early cycles on the functional perspective of a requirement – ensuring, and demonstrating, that the detail of the requirement is properly understood and agreed. This may be followed by cycles focussed on usability – ensuring interaction with the solution is effective and efficient. Later cycles may then focus on ensuring the required non-functional quality (e.g. performance or robustness) is achieved, that all tests pass as expected, and all acceptance criteria for the feature are met.

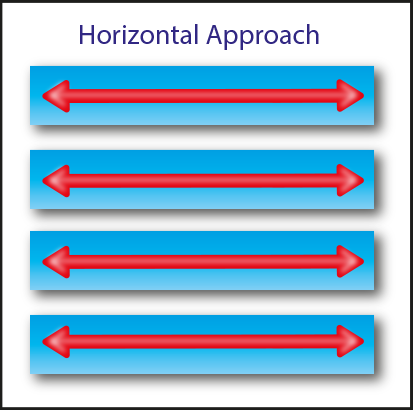

11.2.2 Solution focus

Horizontal Approach

For example: Projects where a horizontal approach may be appropriate:

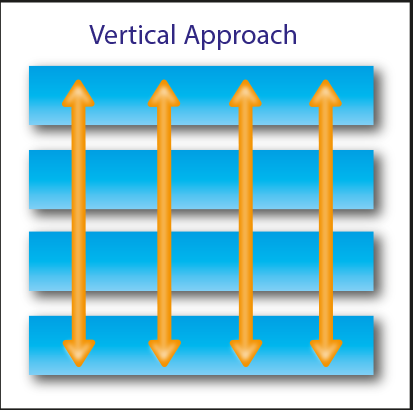

Vertical Approach

For example: Projects where a vertical approach may be appropriate:

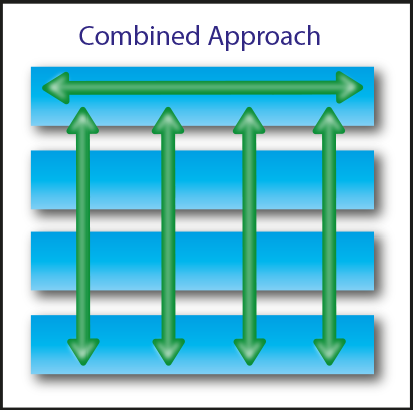

Combined Approach

For example: Projects where a combined approach may be appropriate:

11.3 Controlling Iterative Development

Each Iterative Development cycle is intended to result in an incremental change to the Evolving Solution that brings it, or more probably a feature of it, progressively closer to being complete or “done”.

It is quite possible, however, that the review of such an incremental change may reveal that the solution has evolved in a way that is not right. Under such circumstances it is important, wherever possible, to have the option to revert to the previously agreed version and undertake another cycle based on what has been learnt and on any new insight arising from the review. Configuration Management of the solution is therefore an important consideration.

11.4 Quality in Iterative Development

One of the defining principles of DSDM is to never compromise quality. To achieve this we should (amongst other things) define a level of quality in the early lifecycle phases and then ensure that this is achieved. The challenge then is how to meaningfully define quality and then measure it in an iterative context.

11.4.1 Quality criteria

Quality criteria need to address the required characteristics of the product or feature. These characteristics are driven by the context in which the product or feature is going to be used.

For example: For a house brick

For example: For a software application

11.4.2 Acceptance criteria

By the time Iterative Development of the solution starts, the main deliverables will already have some acceptance criteria associated with them from the Foundations phase. It might not be practical or even appropriate to define these in full detail during Foundations, but by the time development of a particular feature starts, its acceptance criteria should be objective and quantified (rather than subjective).

For example: An ‘on-line purchase’ feature would need a defined set of inputs (e.g. product codes and purchase volumes), a planned response (e.g. calculating and displaying the item and total costs whilst separately calculating tax) and the context within which this is happening (e.g. checking the stock levels needed to fulfil the request).

If the acceptance criteria are vague or subjective (as may be the case at the end of Foundations) then more conversation is needed to agree on the specific details.

Note that this information informs both what needs to be built and how it will be assessed, so it is essential that the acceptance criteria are agreed before work starts.
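The on-line purchase example can be written down as an objective, executable check. The following is only a minimal sketch in Python: the product codes, prices, stock levels, tax rate and the `quote_purchase` function are all hypothetical and invented for illustration, not part of DSDM.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical catalogue, stock levels and tax rate, invented for illustration.
PRICES = {"A100": Decimal("2.50"), "B200": Decimal("10.00")}
STOCK = {"A100": 20, "B200": 1}
TAX_RATE = Decimal("0.20")


@dataclass
class Quote:
    item_costs: dict
    total_cost: Decimal
    tax: Decimal


def quote_purchase(order):
    """Price an order given as a mapping of product code to purchase volume.

    Raises ValueError when stock levels cannot fulfil the request.
    """
    # Context: check the stock levels needed to fulfil the request.
    for code, volume in order.items():
        if volume > STOCK.get(code, 0):
            raise ValueError(f"insufficient stock for {code}")
    # Planned response: item costs, total cost, and tax calculated separately.
    item_costs = {code: PRICES[code] * volume for code, volume in order.items()}
    total = sum(item_costs.values(), Decimal("0"))
    return Quote(item_costs, total, total * TAX_RATE)


def check_acceptance_criterion():
    """Objective, quantified check: defined inputs and exact expected values."""
    quote = quote_purchase({"A100": 4, "B200": 1})
    assert quote.item_costs == {"A100": Decimal("10.00"), "B200": Decimal("10.00")}
    assert quote.total_cost == Decimal("20.00")
    assert quote.tax == Decimal("4.00")
    return True
```

Because the inputs, the planned response and the context are all stated as exact values, there is little room for a subjective judgement about whether the criterion has been met.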

Thought is applied to both how the solution is built and how to verify that it has been built correctly. Where the DSDM structured timebox is used (Chapter 13.3), the detailed work on acceptance criteria takes place primarily in the Investigation step. Where a less formal structure is used, it takes place as a first step in addressing a particular requirement once it has been selected, whenever that occurs within the Timebox.

11.4.3 Validation and verification

Validation asks ‘Are we building the right thing?’ whilst verification asks ‘Are we building the thing right?’

In an Iterative Development context, validation does not need to be a separate activity as the process of collaborative design of the solution with direct involvement of Business Ambassador and Business Advisor roles means this happens naturally. However, verification activity still needs to be explicitly considered, to ensure it is fully integrated in the Iterative Development cycle. How this will be achieved is part of the development approach and should be described in the Development Approach Definition if appropriate.

Having agreed how the quality will be verified, action ensures verification is carried out effectively and efficiently. The person responsible for producing the product will naturally carry out his or her own assessments as part of that development activity. Simultaneously, a separate person (in most cases the Solution Tester) needs to prepare for the independent verification activity. This can be just as time-consuming as making the product (in some cases more time-consuming).

11.4.4 Static and dynamic verification (reviews and testing)

There are two broad classes of verification – static and dynamic. Static verification involves inspecting a deliverable against its acceptance criteria or agreed good practice. The advantage of this type of verification is that it is based on observation alone and so cannot cause harm to the product being inspected.

Some items can only be statically verified.

For example: Documents, or the dimension of a house brick

Other items might be verified statically and/or dynamically.

For example: The components of an engine can be reviewed against the blueprint to statically test them. Running the engine allows it to be dynamically tested.

As static methods present no risk that the deliverable will be damaged by the process (presuming non-invasive methods), there are potential advantages to inspection even when an item could be tested dynamically.

Static verification - reviews

Reviews can range from informal peer reviews through to highly structured and formal reviews involving experts or perhaps groups of people. The level of formality is often driven by the nature of the product and by corporate or regulatory standards.

Some types of static testing can be automated.

For example: On an IT project, code scanners can check that agreed coding standards have been followed. This can be particularly useful for initially checking security aspects of a solution.
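As a rough illustration of automated static verification, the sketch below inspects source code without running it, using Python's standard `ast` module. The coding standard it checks (function names must be snake_case) and the names `find_naming_violations` and `SAMPLE` are assumptions made for this example; real code scanners cover far more than this.

```python
import ast
import re

# Assumed coding standard for this sketch: function names are snake_case.
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def find_naming_violations(source):
    """Return sorted (line number, name) pairs for non-snake_case functions.

    The source is parsed but never executed, so this is static verification.
    """
    tree = ast.parse(source)
    violations = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            if not SNAKE_CASE.match(node.name):
                violations.append((node.lineno, node.name))
    return sorted(violations)


# Hypothetical code under review.
SAMPLE = '''
def calculate_total(items):
    return sum(items)

def CalculateTax(total):
    return total * 0.2
'''
```

Calling `find_naming_violations(SAMPLE)` reports the `CalculateTax` function, which a reviewer could then follow up.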

The Technical Coordinator is responsible for the technical quality of the solution by “ensuring adherence to appropriate standards of technical best practice” and so should ensure that:

- Such practices are appropriate and understood by all

- An appropriate regime of peer review, with an appropriate level of formality, is part of the team’s working practice

- Appropriate, contemporary evidence of review activity is captured as required

Whilst the rigour of a review can vary, mostly they share certain key qualities:

1. There are key roles that need to be fulfilled:

   - The producer(s) of the item being reviewed (author(s) in the case of a document)

   - The reviewers

   - A review moderator, where appropriate, for very formal reviews

2. All reviews require time to be carried out. A simple, informal review may be peers gathering at a desk and reviewing the item together, whereas a formal review needs to be properly planned, preferably as a Facilitated Workshop

3. Every review involves assessing the product against criteria, which may be specific to that item (defined as acceptance criteria) or general to an item of that kind (general standards or good practice such as those defined by the development approach). The criteria need to be agreed (and probably documented) in advance to gain the most benefit, but they can of course also evolve over time to accommodate the current situation and appropriate innovation in working practice

4. Every review must reach a conclusion. Commonly there are three potential results:

   - The item is fit for purpose and no further action is required

   - The item needs minor amendments to make it fit for purpose. In this case, the review group might nominate one individual to check that required changes are made

   - The item needs major amendments before it is fit for purpose. In this case the item typically needs to be fully reviewed again after being reworked

Reviews may occasionally result in no clear outcome. In this case the people involved need to collaborate and if necessary bring other people in to the discussion in order to reach an agreed outcome.

Dynamic verification - testing

The act of dynamically checking an item is commonly known as testing. There are three broad classes of tests which are useful to consider when dynamically verifying a deliverable:

- Positive tests check that a deliverable does what it should do

  - e.g. when you add an item to your basket on a web site the item does appear there

- Negative tests check that a deliverable doesn’t do what it shouldn’t do

  - e.g. if you put the wrong key into a padlock, you shouldn’t be able to open it

- ‘Unhappy path’ tests check the behaviour of the deliverable when unusual or undefined things happen

  - e.g. what happens when a car engine overheats? And is that behaviour ok?

Based on these test classes, with some thought it is usually possible to think up multiple positive, negative and unhappy path tests for each acceptance criterion. Every test would typically have the following:

- A defined starting state

- A defined set of actions which we will carry out

- An outcome which we expect to see
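The three test classes and the three parts of a test can be sketched in code. The `Basket` class below is hypothetical and exists only to give the tests something to exercise; the behaviour chosen for the unhappy path (rejecting a missing product code) is an assumption about what the team would have agreed.

```python
class Basket:
    """Hypothetical shopping basket, used only to illustrate the test classes."""

    def __init__(self, catalogue):
        self.catalogue = set(catalogue)
        self.items = []

    def add(self, product_code):
        if product_code not in self.catalogue:
            raise KeyError(product_code)
        self.items.append(product_code)


def test_positive_item_appears_in_basket():
    basket = Basket({"A100"})        # defined starting state
    basket.add("A100")               # defined action
    assert basket.items == ["A100"]  # expected outcome


def test_negative_unknown_item_is_rejected():
    basket = Basket({"A100"})
    try:
        basket.add("ZZZ")            # a product that is not in the catalogue
    except KeyError:
        pass
    else:
        raise AssertionError("unknown product was accepted")
    assert basket.items == []        # the basket must be unchanged


def test_unhappy_path_missing_product_code():
    basket = Basket({"A100"})
    try:
        basket.add(None)             # unusual input: no product code at all
    except KeyError:
        pass
    else:
        raise AssertionError("missing product code was accepted")
    assert basket.items == []


def run_all_tests():
    """Run each test in turn and return the names of those that passed."""
    tests = [
        test_positive_item_appears_in_basket,
        test_negative_unknown_item_is_rejected,
        test_unhappy_path_missing_product_code,
    ]
    for test in tests:
        test()
    return [test.__name__ for test in tests]
```

Each test makes its starting state, its actions and its expected outcome explicit, which is also what makes a failure repeatable.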

Planned and exploratory testing

Preparing defined tests in advance has useful benefits:

- Firstly, you can ensure that you consider each of the acceptance criteria and testing perspectives to give you the best chance of identifying high-value tests

- Secondly, you can prioritise your tests (using MoSCoW) to make sure that you cover the most important ones during execution

- Finally, you can prioritise the test execution, for example to target areas of risk

Combining these factors (good coverage, highest value criteria and highest areas of risk) ensures that the best project value is derived from the testing activity.
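One possible way to combine these factors is sketched below. It is an assumption-laden illustration rather than a DSDM rule: the 1 to 5 risk score, the choice to order by MoSCoW priority first and risk second, and the decision to leave "Won't" tests out of this execution are all invented for the example.

```python
from dataclasses import dataclass

# MoSCoW priorities in descending order of importance.
MOSCOW_ORDER = {"Must": 0, "Should": 1, "Could": 2, "Won't": 3}


@dataclass
class PlannedTest:
    name: str
    priority: str  # MoSCoW priority of the test
    risk: int      # hypothetical score: 1 (low risk) to 5 (high risk)


def execution_order(tests):
    """Order tests by MoSCoW priority, then by descending risk.

    Tests prioritised as "Won't" are left out of this execution.
    """
    runnable = [t for t in tests if t.priority != "Won't"]
    return sorted(runnable, key=lambda t: (MOSCOW_ORDER[t.priority], -t.risk))


# Hypothetical planned tests for an on-line shop.
SAMPLE_TESTS = [
    PlannedTest("export report", "Could", 2),
    PlannedTest("take payment", "Must", 5),
    PlannedTest("show basket", "Must", 3),
    PlannedTest("save wish list", "Should", 4),
    PlannedTest("gift wrap", "Won't", 1),
]
```

With this ordering, if the Timebox runs short, the tests that are dropped are the lowest-priority, lowest-risk ones.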

It is possible to test without preparation (this is typically known as exploratory testing) but it is a technique that requires considerable skill and experience with testing and extensive procedural knowledge of the way the solution will be used in order to be effective. It is critically important that any defective behaviour identified as a result of exploratory testing can be replicated. This means that the starting state, the actions taken and the results (both actual and expected) still need to be defined but this is often difficult to achieve because it has to happen after the problem has been identified. For this reason, a balance between planned and exploratory testing is advisable. See below for further considerations on the use of exploratory testing.

Manual and automated testing

In recent years, test automation tools have improved significantly. These now range from shareware, through integrated development environments, to wide-ranging commercial tools. Given the rapid pace associated with Iterative Development, the effective and efficient use of automation is essential for testing.

Automated tools are very effective when dealing with precise inputs and predictable outputs, but are less effective when such precision cannot be achieved and/or when judgement is required about whether a deviation from what is expected is acceptable or not. For these reasons, a blend of manual and automated testing is usually required.

Automated tests can take considerable time to prepare (though the best tools minimise this) and are used to run the same tests time and again. However, it is important not to become blindly reliant on automated tests, because by running the same tests the same types of problems appear, whilst other errors outside these tests remain undetected. Consequently, there is still value in manual testing to quickly test new or specific aspects of the solution. Tests should only be automated if there is a high degree of confidence that they will need to be repeated multiple times (though in Iterative Development this is highly likely in most cases). Manual testing should focus more on exploratory aspects (exploratory testing) whilst automated testing is typically a form of planned testing. When exploratory tests find a significant problem they should be reverse engineered into a planned test and then automated where practicable.

Documentary evidence

Whatever methods of testing are used, in some circumstances it may be essential or mandatory to capture evidence to show what was done and what behaviour was observed. Review of test results may reveal trends that can be addressed through evolving either the solution design or development standards. Very importantly, it also allows a project to demonstrate due diligence to an auditor or external regulator, if appropriate.

Roles and responsibilities for testing activity

The Technical Coordinator for the project is responsible for the overall quality of the solution from a technical perspective and so is responsible for “ensuring that the non-functional requirements are reasonable at the outset and subsequently met”.

The Solution Tester is responsible for carrying out all types of testing except for:

- Business acceptance testing: that is the responsibility of Business Ambassador(s) and Business Advisors

- Unit testing of the feature: that is the responsibility of the Solution Developer

Note: As part of a collaborative team, the Solution Tester will be supporting other roles to fulfil their testing responsibilities by providing testing knowledge and expertise.

11.4.5 Are we ‘done’ yet?

After verification has been undertaken (whether statically or dynamically) the key question is whether or not the acceptance criteria have been met in a meaningful way. It may be obvious that the criteria have clearly been met or have clearly failed. In other cases more conversation is needed to decide whether the team are confident that the solution is fit for purpose or not, based on what has been observed. This could imply that the acceptance criteria were not sufficiently understood or defined. Alternatively, even though all the ‘Must’ criteria may have been met, the product may have failed against so many other, lesser criteria that it is unlikely it will actually deliver the benefit needed from it.

If the solution has unconditionally met all the acceptance criteria, then it is ‘done’. Where only some of the criteria have been met, then the product may need to be evolved further to ensure that more criteria are met next time it is validated. More conversation will be needed, followed in due course by more thought and action to implement what has been agreed upon.

Once an item is agreed as ‘done’ it is advisable to record any discretionary acceptance criteria which remained unfulfilled. This information is valuable as:

- It may have a knock-on effect on other parts of the solution or work yet to be done

- The team may choose to spend time later on fixing these criteria rather than implementing lower-value features

Remember that an accumulation of less serious defects may eventually have an impact on the Business Case which is not clearly shown by any one failed criterion in isolation.
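The reasoning in this section can be summarised as a small sketch. It is a deliberate simplification: in practice the outcome is agreed through conversation, not computed, and the `Criterion` type, the `assess` function and the three status labels are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    description: str
    priority: str  # "Must", "Should" or "Could"
    met: bool


def assess(criteria):
    """Return a status and the unmet discretionary criteria to be recorded."""
    unmet_must = [c.description for c in criteria
                  if c.priority == "Must" and not c.met]
    unmet_discretionary = [c.description for c in criteria
                           if c.priority != "Must" and not c.met]
    if unmet_must:
        status = "evolve further"        # a 'Must' criterion has failed
    elif unmet_discretionary:
        status = "conversation needed"   # the team decides if it is fit for purpose
    else:
        status = "done"                  # every criterion unconditionally met
    return status, unmet_discretionary
```

Returning the unmet discretionary criteria alongside the status reflects the advice to record them, so that an accumulation of lesser failures remains visible to the team.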

11.5 Summary

Iterative Development in a project context needs up-front thought. However, this is not about big design up-front (BDUF) or detailed planning. It is more a consideration of the strategy for development. The DSDM philosophy states that projects deliver frequently, so the ‘big picture’ needs to be broken down into small manageable chunks to facilitate this frequent delivery.

The principles (focus on the business need, deliver on time, collaborate and never compromise quality) must also be considered, as these drive how the Solution Development Team works. How work is planned at the detailed level, ensuring the right people are involved at the right time and assuring quality as development progresses, requires the whole team to be bought in to a sensible strategy for development that they help shape during the Foundations phase. Where appropriate, this will be documented in the Development Approach Definition.

For small, simple projects delivering conceptually simple solutions, consideration of these issues may take an hour or two and be based on simple conversation and ‘round the table’ agreement. However, as a general rule, the need for a more formal and more carefully considered Iterative Development strategy increases with the size and complexity of the project organisation and the solution to be created.

Quality assurance is a key part of delivering a solution that is fit for business purpose. However, the formality and rigour of testing will depend very much on the nature of the project and the standards laid down by the organisation.